Who is Nassim Nicholas Taleb and why listen to him?

Nassim Taleb is an author and scholar, and he used to be a financial trader. He has taught at several universities and is now Professor of Risk Engineering at NYU. He offers a new perspective on how we can deal with randomness, risk and uncertainty in our lives.

Near the end of his studies, Nassim Taleb went to the prestigious Wharton Business School in Pennsylvania, where he listened to distinguished professors and top executives from big companies. Afterwards he joined an investment firm in Manhattan, where he worked alongside professional traders.

He was surprised to find out that none of these people really knew what was going on. The economists at the world’s best universities couldn’t predict where the market would go, even with all their fancy models. And the professional investors couldn’t predict stock prices at all. They didn’t even seem to realize the massive risks they were taking every day, while acting like they were being conservative.

The 1987 Financial Crisis

Taleb knew an extreme and unpredictable event would happen; he just didn’t know when. So he created a financial strategy to prepare for it. Sure enough, less than 5 years after he graduated, in 1987 the stock market had its biggest one-day percentage drop in history. No economist or risk manager predicted it. The crash didn’t even have any clear cause, which must have been all the more distressing to them.

Yet on that day when everyone on Wall Street was in despair, Taleb says he slept like a baby. He’d made enough money to be set for life. From then on he was mostly free to focus on his study and writing.

Another famous graduate of Wharton is Donald Trump. In his book The Art of the Deal he didn’t seem very impressed by his professors either, saying “Perhaps the most important thing I learned at Wharton was not to be overly impressed by academic credentials.” If you want to hear more of Trump’s stories from the business world, then go read our summary of The Art of the Deal by Donald Trump.

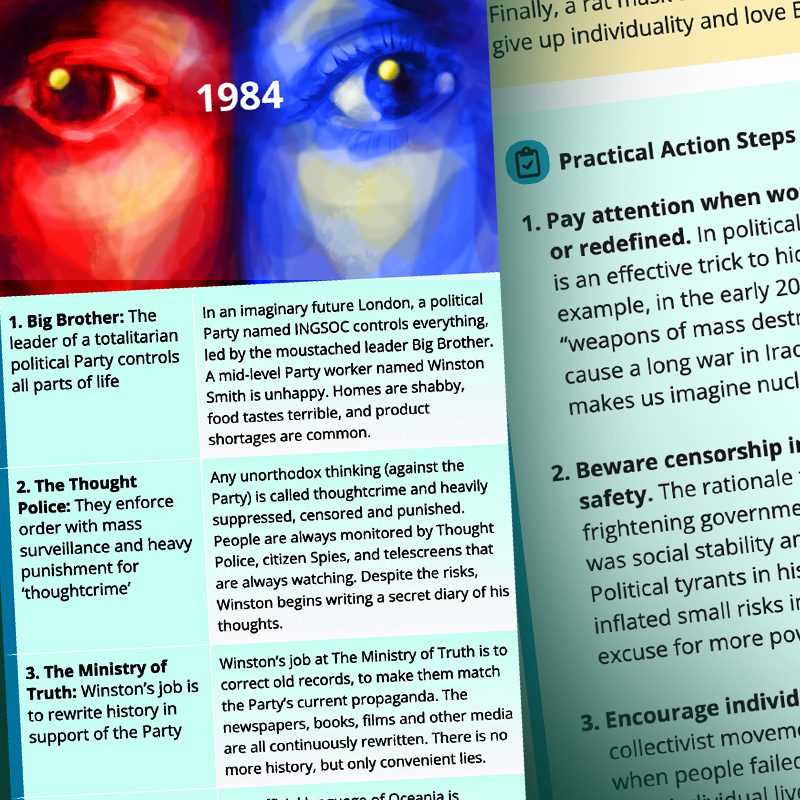

1. Black swan events are unprecedented, have a huge impact, but are later explained away as predictable

History and societies do not crawl. They make jumps. They go from fracture to fracture, with a few vibrations in between. Yet we (and historians) like to believe in the predictable, small incremental progression.

For most of history people only saw white swans. Everyone knew black swans didn’t exist, so calling something a black swan used to mean it was impossible.

Then in 1697 something totally unexpected happened. Dutch explorers in Western Australia saw a living, breathing black swan. They were stunned. Later on, philosophers such as John Stuart Mill began using the black swan as a metaphor to talk about events which are totally unpredictable based on past evidence.

Nassim Nicholas Taleb took this idea one step further. He describes an event as a Black Swan when:

- It is totally unexpected AND

- It has a huge impact in the world AND

- People later give convincing explanations for why it happened.

Black swan events include:

- World War 2,

- The market crash of 1987,

- The spread of the internet,

- The 9/11 attacks on the World Trade Center,

- The discovery of antibiotics

The Lebanese Civil War

Nassim Taleb was shocked by a black swan event early in his life.

He grew up in Lebanon, a country about half Christian and half Muslim. There had been peace in Lebanon for 1300 years. In school, kids were taught about how uniquely civilized and tolerant their culture was compared to others nearby. Then, when Taleb was a teenager, the unthinkable happened.

A bloody civil war broke out between Christians and Muslims in the country. Bullets and bombs tore through residential areas.

Adults told Taleb endless times that the war would be over “in a few days,” yet it lasted almost seventeen years. Taleb noticed that most people around him couldn’t predict anything about the war. But after the fact they could provide great explanations for why it all happened. The smarter and more educated someone was, the more logical their explanation sounded.

Later in life Taleb realized history is dominated by these unpredictable events that have a huge impact. Many black swan events have happened in the past near Lebanon. A couple thousand years ago, Christianity spread across the world. Today we all see Christianity as a fact of life, but it began as a totally insignificant cult, barely mentioned by historians at the time. In a different nearby area, roughly 1,400 years ago, Islam’s spread was even more blistering and unpredictable.

A black swan used to mean something impossible, until one day people discovered they actually existed. Now black swan is a term for events which are unprecedented, have a huge impact, yet most people later claim to know why they happened.

2. More and more, we live in Extremistan, an unequal world with unpredictable extreme outliers

Taleb says we are living in two separate worlds: Mediocristan and Extremistan.

Mediocristan is the world humans came from, where things are more equal and similar. It’s the natural world, the world our intuitions were designed for. Human height fits in this world. Imagine 50 random people. Some of them may be a couple feet taller or shorter than average, but it’s a relatively small difference.

A few more examples of things that exist in Mediocristan:

- Weight of animals or plants

- Grades in school

- Salaries in conventional jobs like welding, retail, medicine, etc.

Extremistan is the world we are moving into, where extreme outliers can have a huge, disproportionate impact. It can be hard to wrap our heads around Extremistan. Human wealth fits into this world. Imagine 50 people again, but this time we’re comparing their wealth, and one of them happens to be a billionaire. The billionaire can easily have more money than the other 49 people combined! On the other hand, no person on earth will ever be TALLER than 49 people combined. That’s the big difference between Mediocristan and Extremistan.

A few more examples of Extremistan:

- Stephen King sells more books than thousands of other authors combined

- A Picasso painting sells for a thousand times more than a painting from an unknown artist

- Uber is more valuable than a thousand other tech startups combined
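The 50-person comparison can be made concrete with a few lines of Python. This is only a rough sketch: the heights and net worths below are made-up round numbers chosen for illustration, not figures from the book, and `top_share` is a hypothetical helper name.

```python
# Illustrative numbers only: the heights (cm) and net worths (dollars)
# are invented for this sketch, not taken from the book.

def top_share(values):
    """Return the fraction of the total held by the single largest value."""
    return max(values) / sum(values)

# Mediocristan: 49 people of average height plus one very tall person.
heights_cm = [170] * 49 + [220]

# Extremistan: 49 people with ordinary savings plus one billionaire.
wealth_usd = [100_000] * 49 + [1_000_000_000]

print(f"Tallest person's share of total height: {top_share(heights_cm):.1%}")  # 2.6%
print(f"Richest person's share of total wealth: {top_share(wealth_usd):.1%}")  # 99.5%
```

In the height list, even an extreme individual barely moves the total. In the wealth list, one person is practically the whole total, which is the pattern the examples above share.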

Mediocristan is where we must endure the tyranny of the collective, the routine, the obvious, and the predicted; Extremistan is where we are subjected to the tyranny of the singular, the accidental, the unseen, and the unpredicted.

Taleb believes we are increasingly living in Extremistan because of technology and global connectivity. This trend started hundreds of years ago and has only been accelerating with inventions like the printing press, television and the internet. He almost sounds like he predicted the 2020 coronavirus pandemic in this next quote:

As we travel more on this planet, epidemics will be more acute—we will have a germ population dominated by a few numbers, and the successful killer will spread vastly more effectively. Cultural life will be dominated by fewer persons: we have fewer books per reader in English than in Italian (this includes bad books). Companies will be more uneven in size. And fads will be more acute.

Mediocristan is the world we came from, where differences between individuals are relatively small (e.g. human height and weight). Extremistan is the world we’re moving into, where outliers have an enormous impact (e.g. human wealth or fame). Technology and globalization are speeding up this change.

3. We overvalue what we know, and are blind to what we don’t know

In 2002, the US Secretary of Defense Donald Rumsfeld was asked a question about Iraq and he famously answered:

There are known knowns; there are things we know we know. We also know there are known unknowns; that is to say we know there are some things we do not know. But there are also unknown unknowns—the ones we don’t know we don’t know. And if one looks throughout the history of our country and other free countries, it is the latter category that tend to be the difficult ones.

—Donald Rumsfeld

Although this answer was at first made fun of by the press, it brought the idea of “unknown unknowns” into popular culture. Over time it has gained respect as a quote that succinctly expresses an important idea: that we must often make important decisions with incomplete information, while trying to take into account those invisible “unknown unknowns.”

Imagine a turkey that is being raised on a farm. Every day it gathers another piece of evidence that the farmer is a generous friend. There is no evidence to the contrary. However, after hundreds of days of the turkey’s increasing certainty of its safety, Thanksgiving comes. As Taleb puts it, on that day the turkey “will incur a revision of belief.” (By the way, Taleb borrowed this example from the philosopher Bertrand Russell.)

So the lesson here is: don’t assume absence of evidence equals evidence of absence. As with the Thanksgiving turkey, the 2008 housing crisis and the 9/11 terrorist attacks, events with enormous impact often happen without precedent.
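One way to see the turkey’s mistake is to put a number on its growing confidence. The sketch below uses Laplace’s rule of succession, which is my own choice of illustration and not something the book prescribes: after n good days and no bad ones, it estimates the chance of another good day as (n + 1) / (n + 2). The function name is hypothetical.

```python
from fractions import Fraction

def turkey_confidence(days_fed):
    """Laplace's rule of succession: estimated chance of being fed tomorrow
    after `days_fed` consecutive good days and zero bad days.
    (An illustrative model of naive induction, not Taleb's own formula.)"""
    return Fraction(days_fed + 1, days_fed + 2)

for day in (1, 10, 100, 1000):
    confidence = float(turkey_confidence(day))
    print(f"After {day:>4} good days, estimated safety = {confidence:.4f}")
```

The estimate climbs toward 1 with every uneventful day, so the turkey is most confident on the very day before Thanksgiving. The past data says nothing about the one event that matters.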

Mistaking a naive observation of the past as something definitive or representative of the future is the one and only cause of our inability to understand the Black Swan.

Silent Evidence

Taleb has another name for those unknown unknowns—he calls them “silent evidence.” We all have a hidden bias in how we gather information to support our beliefs. We focus far more on the evidence which is easily visible than the silent evidence which takes some work to dig up.

For example, there is something called “survivorship bias.” This means we all tend to focus on successful individuals and examples. If you want to be successful, then obviously you listen to successful people and the reasons they give for their success, like hard work and persistence.

However, what about all those invisible people who never made it? They may have had just the same work ethic but they failed because of bad luck. We never hear stories of those people because nobody likes to publicize their failures and their biographies would never sell enough to be published anyway!

(By the way, Taleb says the best finance book he’s ever read is called What I Learned Losing a Million Dollars, a book that teaches from the experience of failure. He also points out they had to self-publish the book.)

So how can we reduce our blindness to silent evidence? By investing energy into finding counter examples. For example, don’t look only at the successful individuals, but all the individuals that started in a given group. Don’t just study the most successful startup in your city, but also look at all the failed startups that were launched the same year.
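A small simulation can show why looking at the whole starting group matters. The sketch below is a toy model under loud assumptions: every founder has identical 50/50 odds of a good year, so skill plays no role at all, and the names and parameters are hypothetical.

```python
import random

def simulate_track_records(n_founders=10_000, n_years=10, seed=0):
    """Give every founder the same 50/50 odds each year (pure luck, no skill)
    and return how many good years each one had."""
    rng = random.Random(seed)
    return [sum(rng.random() < 0.5 for _ in range(n_years))
            for _ in range(n_founders)]

records = simulate_track_records()
survivors = [r for r in records if r == 10]  # flawless ten-year streaks

print(f"Founders who started:         {len(records)}")
print(f"Flawless track records:       {len(survivors)}")
print(f"Average good years, everyone: {sum(records) / len(records):.2f}")
```

With these odds we would expect roughly ten flawless streaks out of 10,000 purely by chance. Anyone who studies only those few survivors will find impressive records to explain, while the silent evidence (everyone else) shows an average of about five good years out of ten.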

We underestimate the importance of what we are ignorant of—those “unknown unknowns.” Events often happen without warning or precedent, so don’t assume an absence of evidence is proof of anything. Invest energy shining light on silent evidence, by looking at examples of failure not only success.

4. People create stories about why Black Swans happened, which covers up how unexpected they were

Remember that Black Swan events are unpredictable, have a big impact, AND afterwards people can always find a good narrative to explain WHY they happened. It’s this last part that we’ll explore now.

Part of human nature seems to be we love to tell stories and find cause-and-effect patterns in the world, even where those patterns may not exist. Taleb calls this the Narrative Fallacy.

Stories help us to simplify the world. We remember information a lot more easily when it is told as a story. On the other hand, we find it very hard to remember information that is random and disconnected. For example, most of us can watch a 2 hour movie and easily explain what happened to a friend, but it would be very difficult to listen to a 2 hour math lecture and then repeat all the formulas we just heard.

Our education system relies on stories. Think back to when you learned about World War 2. The questions on the tests probably sounded like:

- “Why did the war start?”

- “Why did Hitler rise to power?”

- “Why did the Germans ultimately lose?”

But do we really know why all these things happened, or are we just finding a cause and effect that sounds reasonable?

Just because we can weave a convincing story after the fact, that doesn’t mean we really know why something happened! In truth, things often happen randomly, or they have invisible causes, or they have so many different causes we won’t figure it out.

The Berlin Diary – Looking Forwards Vs. Looking Backwards

A turning point in Taleb’s academic life was reading a book called “Berlin Diary” by journalist William Shirer. Shirer wrote this book while he was living in Berlin from 1934 to 1941. For a history book, it offers a unique perspective because all the entries are written as the events are taking place, without the benefit of hindsight.

From this book, Taleb saw the dramatic difference between historians who write about history after the fact and people who experience it as it is happening. “Berlin Diary” revealed the shocking fact that nobody expected World War 2 to happen, not even people living right in the middle of Germany or France.

Most French people fully believed Hitler would fade out quickly, which is why the country was so poorly prepared for the Nazi invasion. Yet in books written much later historians talk about “increasing tensions” leading up to the war. In reality, it was a surprise to everyone.

Narrative Therapy

With narratives we easily trick ourselves into believing the world is more orderly and predictable than it really is.

In fact, Taleb suspects people use narratives as a form of therapy. If something unexpected and shocking happens, we can piece together a story that explains why, which helps us feel more in control again.

The problem is, when we start to believe those stories about why past Black Swans happened, it makes us blind to how unexpected they were. As a result, we become less prepared for the next big one.

The more honest approach is to admit “we don’t know.” However, this is an answer many will find hard to accept. No teacher will accept that answer on a test, and no newspaper publisher is going to print that article.

Prediction not narration

The bottom line here is we shouldn’t rely on knowledge or theories created solely by looking backwards. Rather, we should do more empirical testing to make sure our knowledge proves itself in forward-looking predictions.

The way to avoid the ills of the narrative fallacy is to favor experimentation over storytelling, experience over history, and clinical knowledge over theories.

Stories help us simplify the world, remember things more easily and feel more in control of our future. However, this can create an illusion of understanding and make us less prepared for the next black swan. It’s better to admit “we don’t know” and test our knowledge with empirical predictions.

5. Most experts mistakenly believe risk can be contained within their models

Experts in business and statistics act as if we live in Mediocristan. To forecast risk, they use tools like bell curves. In a bell curve, most things are around the average. The further something is from the average, the less likely it is presumed to be, and the odds shrink faster and faster as you move out. As a result, these models all but rule out black swan events, the rare outliers that have world-shaking impact.
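To get a feel for how quickly a bell curve dismisses extreme events, here is a minimal sketch comparing its tail with a fat-tailed alternative. The power-law form and its exponent of 3 are assumptions picked for illustration, not values from the book, and the two curves are not on a calibrated common scale, so only the qualitative gap is meaningful.

```python
import math

def gaussian_tail(k):
    """P(X > k standard deviations above the mean) under a bell curve."""
    return 0.5 * math.erfc(k / math.sqrt(2))

def power_law_tail(k, alpha=3.0):
    """P(X > k) for a Pareto-style tail with minimum value 1.
    The exponent alpha=3 is an arbitrary choice for illustration."""
    return k ** -alpha

print(" k   bell curve   power law")
for k in (2, 5, 10, 20):
    print(f"{k:>2}   {gaussian_tail(k):.2e}     {power_law_tail(k):.2e}")
```

Under the bell curve, an event ten units out is so improbable that a model can treat it as impossible. Under the fat-tailed curve, the same event is merely rare. A risk model built on the first assumption has no room for the outliers that matter most.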

Taleb calls this over-reliance on limited models either “Tunnelling” or “The Ludic Fallacy.” This problem is widespread across many social sciences, especially economics.

When the models inevitably fail, the economists simply say the cause of the crash was outside their area of expertise. Then they continue using the failed models as if nothing happened.

For example, Robert Merton Jr. and Myron Scholes both won the Swedish Bank Nobel Prize in Economics. In 1994, they helped open a large trading firm called Long-Term Capital Management. The firm hired the smartest people in academia and used cutting-edge mathematical risk models, which gave many investors great confidence in it. It had a few years of success, then in 1998 a financial crisis in Russia totally destroyed the company. To avoid wider damage to the financial system, the firm had to be bailed out by a group of Wall Street banks in a rescue organized by the Federal Reserve.

So you might assume the theories and models of those economists were thrown out, right? Wrong. The universities still teach them, saying it’s better to teach something that’s right a lot of the time, than teaching nothing at all.

Forecasting is (mostly) foolish

Nassim Taleb says that is absolutely foolish. It is better to not forecast at all than to drive according to the wrong map. And it is irrelevant whether the models are right most of the time. What matters is what happens when the models are wrong, because Black Swan events have such a supersized impact.

I have never had an outlook and have never made professional predictions—but at least I know that I cannot forecast and a small number of people (those I care about) take that as an asset. There are those people who produce forecasts uncritically. When asked why they forecast, they answer, “Well, that’s what we’re paid to do here.” My suggestion: get another job.

Later in the book Taleb clarifies his position and provides some rules to reduce your chance of catastrophic errors in forecasting:

- If you must make a prediction, then at least forecast a range of outcomes that includes a wide margin of error.

- Base your strategy not on what you predict will happen, but on the worst case scenario you can reasonably assume is possible.

- Finally, try to keep your forecasts as short-term and small-scale as you can. It’s a lot more reasonable to predict what your income will be in 2 years than what the global economy will look like in 10 years.

“Platonifying” the world

Risk in real life is not clear-cut and sterile, like the mathematical models taught in universities. It is fuzzy and indefinite. Intellectuals often forget that the limited and artificial maps of the world they create are not reality.

Taleb calls this “platonifying” the world. He believes modern intellectuals, following in the footsteps of the philosopher Plato, have placed too much importance on abstract categories and elegant theories.

Robert Sapolsky is Professor of Biology and Neurology at Stanford, and he made a similar point in his book called Behave. He says scientists in different disciplines always interpret things according to the models they already know.

For example, if you ask a scientist to explain a human behavior like aggression, then a biologist will talk about hormones, a psychologist will talk about mental patterns and a sociologist will talk about cultural pressures. But Sapolsky believes paying too much attention to one category makes us lose sight of the big picture.

That’s why he tries to explain human behaviors from many points of view, including neurons, genes, hormones, childhood experiences, brain development, culture and evolution. If that sounds interesting to you, then check out our summary of Behave by Robert Sapolsky. It’s a very enlightening book.

Experts act as if their models work, even when they have failed countless times. The models can’t take into account outlier black swan events which have a supersized impact. It is better to not forecast than drive according to a wrong map.

6. A career in Mediocristan gives the appearance of stability, but hidden risk may be building up

Most people’s careers exist in Mediocristan. There is not a big difference between the highest paid and lowest paid workers in most fields. At the beginning of your career you are paid a stable weekly salary and 40 years later you might be making 1.5 or 2 times as much. Not a dramatic change.

However, careers in Mediocristan are not nearly as safe or stable as they appear to be. Just like that turkey before Thanksgiving, people in Mediocristan may be totally unaware when their jobs are becoming more and more precarious.

For example, manufacturing workers in North America had some of the best jobs… until most of the companies suddenly went overseas. The workers found themselves unemployed and totally unprepared for the modern job market after decades standing in one factory position. Taleb’s advice to those working in Mediocristan is to not overspecialize—make sure your skills can transfer to other companies if necessary.

Contrast that situation with someone who is self-employed. They may face constant volatility, which means some months they make a lot of money and other months they can’t find new clients. However, this constant exposure to low-level risk makes them much more flexible to market changes.

Someone working a conventional career with a predictable path appears to have a very stable job, yet this may be an illusion. A self-employed person is exposed to constant ups and downs, but this makes them more adaptable to big changes.

7. A career in Extremistan could make you wealthy, but requires working for years with no sure payoff

In Extremistan, people may work hard for years making little or no money, but they have the potential of a big payday in the future. A lot of creative workers and entrepreneurs exist here, including artists, writers, musicians, startup founders and the self-employed.

If one of these people creates a hit music album, a technological breakthrough or the cure for cancer, then they could become obscenely wealthy. On the other hand, most of these people never get this huge payoff.

If you want to pursue a career in Extremistan, then you will face some unique challenges.

The first challenge is the human brain appears to be designed for steady and linear rewards. Just imagine a caveman or woman looking through the forests for food. They are motivated by hunger, then after a short time they find some berries. Eating them provides many pleasurable sensations as a dopamine reward. A weekly paycheck follows this same pattern. You spend a week working for a reliable reward that is coming in the near future.

On the other hand, working for years with no visible payoff is the exact opposite of what our minds were designed for, so it can be very discouraging.

Also, people around you may shame you for not appearing to be a contributing member of society. So Taleb says it’s very important to find a like-minded group that can support you and is on a similar mission. He mentions how throughout history, most great philosophers, thinkers and artists belonged to some school of thought they could find support and respect in.

People working in Extremistan include artists and entrepreneurs. There’s a potential big payoff, but most people don’t get there. To avoid being discouraged, find a group of like-minded people to find esteem in.

8. Entrepreneurs should rely on trial-and-error rather than top-down planning

Taleb is a big critic of top-down planning and says we need more bottom-up experimentation and trial-and-error to find what works. If a medical treatment works, doctors don’t need to understand why it works right away. They just need to give the treatment. In the same way, if a business idea is profitable, you don’t need to know the theory behind why.

In 1998, Nassim Taleb worked at a European-owned financial firm. They wanted to appear very stable and serious, so they had five top managers creating a detailed five-year plan over the summer. Then the Russian financial crisis of 1998 happened. Just one month after finishing their five-year plan, all five managers were not working at the firm anymore. It turns out that reality didn’t care about the plan.

Taleb says the firm should have known better than to waste time on a detailed plan. Their most profitable product had NOT been planned in advance but discovered accidentally. One of their customers had requested a very specific service and they later realized they could sell the same thing to other people.

The strategy for the discoverers and entrepreneurs is to rely less on top-down planning and focus on maximum tinkering and recognizing opportunities when they present themselves. So I disagree with the followers of Marx and those of Adam Smith: the reason free markets work is because they allow people to be lucky, thanks to aggressive trial and error, not by giving rewards or “incentives” for skill. The strategy is, then, to tinker as much as possible and try to collect as many Black Swan opportunities as you can.

Probably the most often recommended book for aspiring entrepreneurs is The Lean Startup by Eric Ries. In that book, he teaches readers to empirically test many small changes in their product so they can find out what customers respond to. It’s a method of scientifically testing to find the winning business strategy rather than relying on theories from experts.

Ries writes, “What differentiates the success stories from the failures is that the successful entrepreneurs had the foresight, the ability, and the tools to discover which parts of their plans were working brilliantly and which were misguided, and adapt their strategies accordingly.” To learn about his method for launching successful startups, go read our summary of The Lean Startup by Eric Ries.

To find a successful business strategy, we should do less theoretical planning beforehand and much more trial-and-error and experimentation.

9. Taleb’s financial strategy was to be very conservative and very aggressive at the same time

Most investors like to have a nice balance of risk and return. Not so risky that they lose money, but not entirely safe either, because they want to grow their money too.

Taleb used a very different approach:

- 90% of his money went into extremely safe investments like Treasury bills. This way, if a Black Swan event happened, then this money would be safe. He views this almost like insurance. He can’t predict what the next negative Black Swan will be or when it will happen, but the majority of his money will be as safe as possible.

- The other 10% of his money went into very uncertain bets, such as venture capital firms that invest in new startups. Most of these bets will lose money, but if just one pays off, he could become incredibly wealthy. He can’t predict which specific company or investment will pay off, but in this way he creates many opportunities for a positive Black Swan to enter his life.
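The arithmetic behind this 90/10 split can be sketched in a few lines. Everything numeric here is an assumption for illustration: the starting capital, the 2% return on the safe portion, and the payoff multiples on the risky portion are invented, and `barbell_outcome` is a hypothetical helper, not a description of Taleb’s actual portfolio.

```python
def barbell_outcome(capital, safe_return, bet_multiple, safe_fraction=0.90):
    """Final value of a portfolio split between safe assets and risky bets.

    safe_return  : yearly return on the safe part (0.02 means +2%)
    bet_multiple : what each risky dollar turns into (0 = total loss)
    """
    safe = capital * safe_fraction * (1 + safe_return)
    risky = capital * (1 - safe_fraction) * bet_multiple
    return safe + risky

capital = 100_000
scenarios = {"all bets fail": 0, "bets break even": 1, "one big winner": 20}
for name, multiple in scenarios.items():
    final = barbell_outcome(capital, safe_return=0.02, bet_multiple=multiple)
    print(f"{name:>16}: {final:>10,.0f}")
```

Under these assumed numbers the worst case is a loss of about 8% (100,000 becomes 91,800), while a single large winner nearly triples the portfolio (291,800). The downside is capped by construction and the upside is left open, which is the asymmetry the strategy is after.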

Rather than seeking balance, Taleb put 90% of his money into extremely safe investments, to protect him from negative Black Swans. The other 10% went into very risky investments, to expose him to the potential explosive payoff of a positive Black Swan.

Conclusion

I hope you’ve enjoyed this summary. This was one of the most thought-provoking books I’ve read recently, putting into question a lot of what we think we know about the world.

With recent unprecedented events like the 2008 financial crisis, the 2016 election of Donald Trump and the 2020 coronavirus pandemic, I feel this book is more relevant than ever before. And perhaps we are accelerating into Extremistan, so we’d better prepare.
